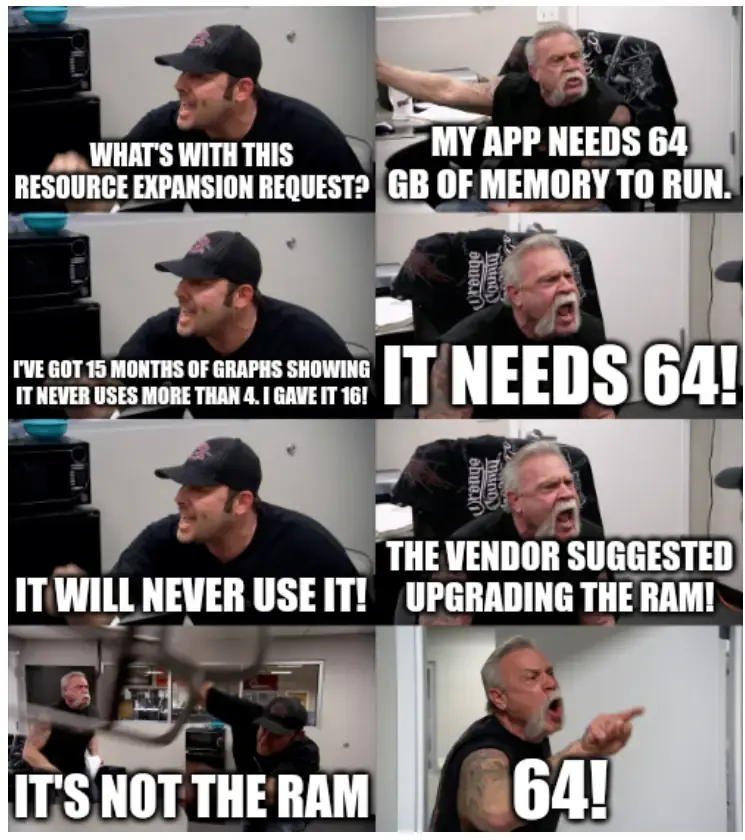

oh god i felt this one. Devs too busy, incompetent or just plain lazy to figure out why their code is so slow, so just have ops throw more CPU and memory at it to brute force performance. Then ops gets to try to explain to management why we are spending $500k per month to AWS to support 50 concurrent users.

Programmer Humor

Welcome to Programmer Humor!

This is a place where you can post jokes, memes, humor, etc. related to programming!

For sharing awful code theres also Programming Horror.

Rules

- Keep content in english

- No advertisements

- Posts must be related to programming or programmer topics

The sad thing: Throwing hardware at a problem was actually cheaper for a long time. You could buy that $1500 CPU and put it in your dedicated server, or spend 40 developer hours at $100 a pop. Obviously I'm talking about after the easy software side optimizations have already been put in (no amount of hardware will save you if you use the wrong data structures).

Nowadays you pay $500 a month for 4 measly CPU cores in Azure. Or "less than 1 core" for an SQL Server.

Obviously you have a lot more scalability and reliability in the cloud. But for $500 a month each we had a 16 core, 512 GB RAM machine in the datacenter (4 of them). That kind of hardware on AWS or Azure would bankrupt most companies in a year.

This happens all the time. Companies are bleeding money into the air every second to aws, but they have enough money to not care much.

AWS really was brilliant in how they built a cloud and how they marketed everything as "pay only for what you use".

We worked with a business unit to predict how many people they would migrate on to their new system week 1-2 … they controlled the migration through some complicated salesforce code they had written.

We were told “half a million first week”. We reserved capacity to be ready to handle the onslaught.

8000 appeared week 1.

That this is deemed brilliant is the sad part.

I mean, I would put brilliant in quotes in the way that it's brilliant for their profits. Not brilliant in the way of making the world a better place.

Do you mean that it's still the case that more resources are allocated than actually used or that the code does not need to be optimized anymore due to elastic compute?

I think both are consequences of the cloud.

It's cheaper for companies to just add more compute than to pay devs to optimize the code.

And it's also not so important to overpay for server capacity they don't use.

Both of these things leads to AWS making more money.

It's also really good for aws that once these things are built, they just keep bringing in money on their own 24 hours per day.

If I remember correctly, that was the original idea of AWS, to offer their free capacity to paying customers.

Do you think that AWS in particular has this problem or Azure and GCP as well? I have mainly worked with DWHs in Snowflake, where you can adjust the compute capacity within seconds. So you pay almost exactly for the capacity you really need.

Not having to optimize queries is a good selling point for cloud-based databases, too.

It is certainly still cheaper than self-hosted on-premises infrastructure.

Meanwhile I'm given a 16gb of ram laptop to compile Gradle projects on.

My swap file is regularly 10+ gigs. Pain.

That reminded me about trying to compile a rust application (Pika Backup) on a laptop with 4 GB of RAM (because AUR).

That was a fun couple of attempts. Eventually I just gave up and installed a flatpak.

God bless flatpack in times of need

I really hope those aren't factorials.

Depends on which crappy software vendor I'm dealing with in any given week. lol

64! is a whole lot more than 64 though. It's a number with 90 digits.

Hmm is unexpected factorial a sub here yet?

They're called “communities”, not “subs”.

subfeddit

Yeah it feels like "sub" has become something like "to google something"

Yeah, but it feels dirty.

We need to come up with a shorthand for that

Bonus if the vendor refuses to provide any further support until your department signs off on the resource expansion.

In a just world that's when you drop the vendor. In a just world.

Then we'd probably have to drop each and every vendor...😩

For some reason I love this meme.

Great. Now my left eye is twitching uncontrollably and I want to punch a sales drone into next quarter.

Flip side of the coin, I had a sysadmin who wouldn’t increase the tmp size from 1gb because ‘I don’t need more than that recommended size’. I deploy tons of etl jobs, and they download gbs of files for processing to this globally known temp storage. I got it changed for one server successfully after much back and forth, but the other one I just overrode it in my config files for every script.

This is why Java rocks with ETL, the language is built to access files via input/output streams.

It means you don't need to download a local copy of a file, you can drop it into a data lake (S3, HDFS, etc..) and pass around a URI reference.

Considering the size of Large Language Models I really am surprised at how poor streaming is handled within Python.

Yeah python does lack in such things. Half a decade ago, I setup an ml model for tableau using python, and things were fine until one day it just wouldn’t finish anymore. Turns out the model got bigger and python filled out the ram and the swap trying to load the whole model in memory.

During the pandemic I had some unoccupied python graduates I wanted to teach data engineering to.

Initially I had them implement REST wrappers around Apache OpenNLP and SpaCy and then compare the results of random data sets (project Gutenberg, sharepoint, etc..).

I ended up stealing a grad data scientist because we couldn't find a difference (while there was a difference in confidence, the actual matches were identical).

SpaCy required 1vCPU and 12GiB of RAM to produce the same result as OpenNLP that was running on 0.5 vCPU and 4.5 GiB of RAM.

2 grads were assigned a Spring Boot/Camel/OpenNLP stack and 2 a Spacy/Flask application. It took both groups 4 weeks to get a working result.

The team slowly acquired lockdown staff so I introduced Minio/RabbitMQ/Nifi/Hadoop/Express/React and then different file types (not raw UTF-8, but what about doc, pdf, etc..) for NLP pipelines. They built a fairly complex NLP processing system with a data exploration UI.

I figured I had a group to help me figure out Python best approach in the space, but Python limitations just lead to stuff like needing a Kubernetes volume to host data.

Conversely none of the data scientists we acquired were willing to code in anything but Python.

I tried arguing in my company of the time there was a huge unsolved bit of market there (e.g. MLOP's)

Alas unless you can show profit on the first customer no business would invest. Which is why I am trying to start a business.

snif

I feel so seen

The second guy is the exact same type of person as my Dad.